You might be surprised to learn that even small errors in your SEO strategy can have large and costly consequences. Sometimes a mistake goes so far that the problem feels beyond your control. That is a scary position to be in, and fixing it takes hard, fast work.

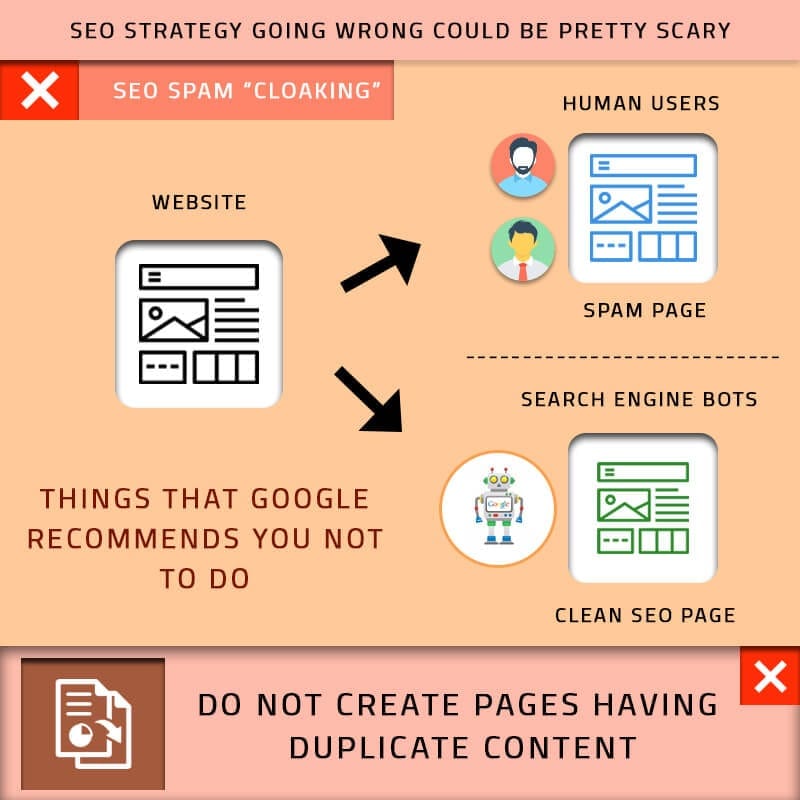

A quick checklist of the things Google recommends you avoid:

- Using spinning tools to generate content automatically

- Participating in link schemes

- Creating pages with duplicate content

- Cloaking

- Applying sneaky redirects

- Using hidden text or links

- Building doorway pages

- Publishing scraped content

- Participating in affiliate programs without adding sufficient value

- Creating pages stuffed with irrelevant keywords

- Creating pages with malicious behavior

- Abusing rich snippet markup

- Sending automated queries to Google

However, even people who know every point listed above often believe that spinning content to avoid plagiarism is acceptable. Many believe that any link is a good link and end up trading links with other webmasters. Some see review stars in the search results and are tempted to use services that fake them in order to stand out in the rankings. None of these is a good idea.

Crawling & Indexing Issues

User-agent: *

Disallow: /

These two lines in a robots.txt file are all it takes to block crawlers from your entire website. The rule is usually added during the development phase and then forgotten at launch, and the moment you spot it on a live site you will definitely feel the pain. If the website was already indexed by the search engines, the following message appears in the SERPs:

“A description for this result is not available because of this site’s robots.txt. Learn more”
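A quick way to catch this before launch is to test the robots.txt content against a sample URL. The following is a minimal sketch using Python's standard `urllib.robotparser`. The helper name `is_blocked`, the two sample rule sets, and the `example.com` URL are illustrative assumptions, and the standard library parser may not match Googlebot's own parsing in every edge case.

```python
from urllib.robotparser import RobotFileParser


def is_blocked(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the given robots.txt text disallows `url` for `user_agent`."""
    parser = RobotFileParser()
    # parse() accepts the file content as a list of lines, so no network call is made.
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


# The development-phase rule discussed above: every crawler, every path.
BLOCK_ALL = "User-agent: *\nDisallow: /\n"
# An empty Disallow value means nothing is blocked.
ALLOW_ALL = "User-agent: *\nDisallow:\n"

print(is_blocked(BLOCK_ALL, "https://example.com/any-page"))  # True
print(is_blocked(ALLOW_ALL, "https://example.com/any-page"))  # False
```

Running a check like this against the live robots.txt as part of a launch checklist is one way to make sure the development rule does not reach production.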

Similarly, the noindex meta tag prevents a page from being indexed. In many content management systems it can be switched on by ticking a single checkbox, which makes it a very easy mistake to make and a very painful one to discover. A UTF-8 BOM (byte order mark) is another small detail that can have a drastic impact on how your website appears in the SERPs.
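Both problems can be spotted with a small script. The sketch below is an illustration only: it looks for a robots meta tag containing `noindex` in a page's HTML and checks whether a file's raw bytes start with a UTF-8 BOM. The function names are hypothetical, and it assumes the BOM concern is a text file such as robots.txt that was saved with a BOM. It does not cover the `X-Robots-Tag` HTTP header, which can also carry a noindex directive.

```python
import codecs
from html.parser import HTMLParser


class _RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute values can be None when the attribute has no value.
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            content = attr.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",")]


def has_noindex(html: str) -> bool:
    """Return True if the HTML contains a robots meta tag with a noindex directive."""
    parser = _RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


def starts_with_utf8_bom(raw: bytes) -> bool:
    """Return True if the raw file bytes begin with the UTF-8 byte order mark."""
    return raw.startswith(codecs.BOM_UTF8)


page = '<html><head><meta name="robots" content="noindex, nofollow"></head></html>'
print(has_noindex(page))                              # True
print(starts_with_utf8_bom(b"\xef\xbb\xbfUser-agent: *"))  # True
```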

Also note that a large share of traffic coming from the same IP address is not necessarily a bad thing. Some site owners have blocked IP addresses that Googlebot was using to crawl their website after becoming convinced that those IPs were harmful. Adding to this list of horror stories, some people block crawlers in order to get pages out of the index after migrating subdomains. This is not a good idea at all, because crawlers need access to the old URLs in order to follow the redirects to the new ones. It is even worse if both subdomains share one robots.txt file, because the block then hides both the old and the new pages from crawlers.
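Before blocking a busy IP address, it is worth checking whether it really belongs to Googlebot. A commonly described approach is a reverse DNS lookup followed by a forward lookup that must resolve back to the same IP. The sketch below assumes that approach. The function names and the hostname suffix list are assumptions for illustration, the forward lookup shown handles IPv4 only, and the lookups are injectable so the example can run offline with stub values.

```python
import socket
from typing import Callable, List

# Assumed hostname suffixes for genuine Googlebot hosts.
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com")


def _reverse(ip: str) -> str:
    # gethostbyaddr returns (hostname, aliases, addresses).
    return socket.gethostbyaddr(ip)[0]


def _forward(host: str) -> List[str]:
    # gethostbyname_ex returns (hostname, aliases, ipv4_addresses).
    return socket.gethostbyname_ex(host)[2]


def is_verified_googlebot(
    ip: str,
    reverse: Callable[[str], str] = _reverse,
    forward: Callable[[str], List[str]] = _forward,
) -> bool:
    """Reverse-resolve the IP, check the hostname suffix, then confirm the forward lookup."""
    try:
        host = reverse(ip).rstrip(".").lower()
        if not host.endswith(GOOGLE_SUFFIXES):
            return False
        return ip in forward(host)
    except OSError:  # covers socket.herror and socket.gaierror
        return False


# Offline demonstration with stub lookups (hypothetical values, no network access).
stub_reverse = lambda ip: "crawl-66-249-66-1.googlebot.com"
stub_forward = lambda host: ["66.249.66.1"]
print(is_verified_googlebot("66.249.66.1", stub_reverse, stub_forward))  # True
print(is_verified_googlebot("203.0.113.5", lambda ip: "host.example.net", stub_forward))  # False
```

The forward lookup matters because anyone who controls reverse DNS for their own IP range can make an address claim a Google hostname.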

Manual Penalties

Penalty is a word that none of us likes, and reading it in a message about your own site can be really scary. It is a clear indication that you, or someone who has been managing the site, did something wrong. Common issues listed by Google include:

- A hacked website

- User-generated spam

- Spammy free hosts

- Unnatural link building

- Thin content with little or no added value

- Sneaky redirects

- Cloaking

- Unnatural links going out from your website

- Hidden text

- Keyword stuffing

Most of the penalties mentioned above are the direct consequence of a shortcut that was taken to benefit the website. With Penguin now operating in real time, a penalty can also follow very quickly. One example is a company that rebrands and migrates to a new website, only to find that the new domain carries a pure spam penalty.

Other errors happen while rebuilding a website, where many people do not set up the redirects properly. Some even delete good-quality content that had been on the old website. Another common error is overwriting the disavow file, especially when no copy of the previous version was kept. Overall, there are many things that can have grave repercussions for your website. Minor mistakes or small decisions can turn out to be scary as well as costly.
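The disavow mistake in particular is cheap to guard against. The sketch below is one possible safeguard, assuming the disavow list is kept as a local text file before it is uploaded: it saves a timestamped copy of the existing file before writing the new version. The function name `backup_then_write`, the file name, and the sample domains are hypothetical.

```python
import shutil
from datetime import datetime
from pathlib import Path
from typing import Optional


def backup_then_write(path: str, new_text: str) -> Optional[Path]:
    """Copy the existing file to a timestamped .bak file, then write the new content.

    Returns the backup path, or None if there was no earlier file to back up.
    """
    target = Path(path)
    backup = None
    if target.exists():
        stamp = datetime.now().strftime("%Y%m%d-%H%M%S-%f")
        backup = target.with_name(f"{target.name}.{stamp}.bak")
        shutil.copy2(target, backup)  # copy2 also preserves file timestamps
    target.write_text(new_text, encoding="utf-8")
    return backup


if __name__ == "__main__":
    # Hypothetical usage: the first call creates the file, the second keeps a copy of it.
    backup_then_write("disavow.txt", "domain:spam.example\n")
    saved = backup_then_write("disavow.txt", "domain:other.example\n")
    print(f"Previous version saved to {saved}")
```

Keeping the file under version control would serve the same purpose; the point is that the previous list is never lost when a new one replaces it.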